AI Enablement Engineer: The Highest-Leverage Role in Tech

Every decent team has one. Maybe more than one. The AI trailblazer. The person who figured out how to multiply themselves earlier and faster than everyone else, and then started showing others the ropes.

You know who they are. They're the one who got you on Claude Code. The one who set up CLAUDE.md and AGENTS.md across all your repos. The one who wired up the GitHub Actions integration before anyone asked. The first person who figured out exactly how Codex was complementary to Claude Code and shared the workflow. The one who dropped that post on context engineering in Slack and said "read this, it changes everything." The person who set up OpenClaw on their Mac Mini at home before you'd even heard of it. The one with multiple agents running 24/7 on a machine under their desk.

That person might not know it yet, but they might be the most valuable person in your company right now. Not because they're operating at 20x, though they probably are, but because they have the potential to lift everyone else.

The role that doesn't exist yet

Every wave of technology creates a role before it creates a title. DevOps existed as a practice at forward-thinking companies for years before it became a job posting. "Data engineer" was just "the person who writes ETL" until the modern data stack gave the role definition and dignity. "Analytics engineer" was something people did quietly at their desks until dbt gave it a name and a community.

We're at that exact moment with AI. The function exists everywhere. The title doesn't.

I'm going to call it AI Enablement Engineer.

Not because the name is perfect, but because the two most important words are right there: enablement and engineer. This role isn't about using AI. It's about enabling others. It's not a management function or a strategy exercise. It's engineering. Hands-on, technical, relentlessly practical.

What AI Enablement actually means

It's easy to confuse this role with adjacent ones, so let's be specific about what this person does.

An AI Enablement Engineer doesn't just build agents for themselves. They don't just vibe-code prototypes. They don't write whitepapers about AI strategy. What they do is accelerate AI adoption in practice, whatever that means for their organization on any given week.

Maybe this week it's breaking through a policy boundary that's blocking the team from using a frontier model. Maybe it's advocating for, or setting up, an enterprise plan with Anthropic or OpenAI. Maybe it's writing an MCP gateway so agents across the company have access to internal tools. Maybe it's fine-tuning a code review bot. Maybe it's setting up Amazon Bedrock as a fallback for when Anthropic's servers are overwhelmed. Maybe it's pushing the people still on GitHub Copilot to give Claude Code a real shot.

The common thread: ungating multipliers for others.

None of this is "building AI agents" in the way most people think about it. It's making AI work for people who wouldn't otherwise get value from it. That's the distinction.

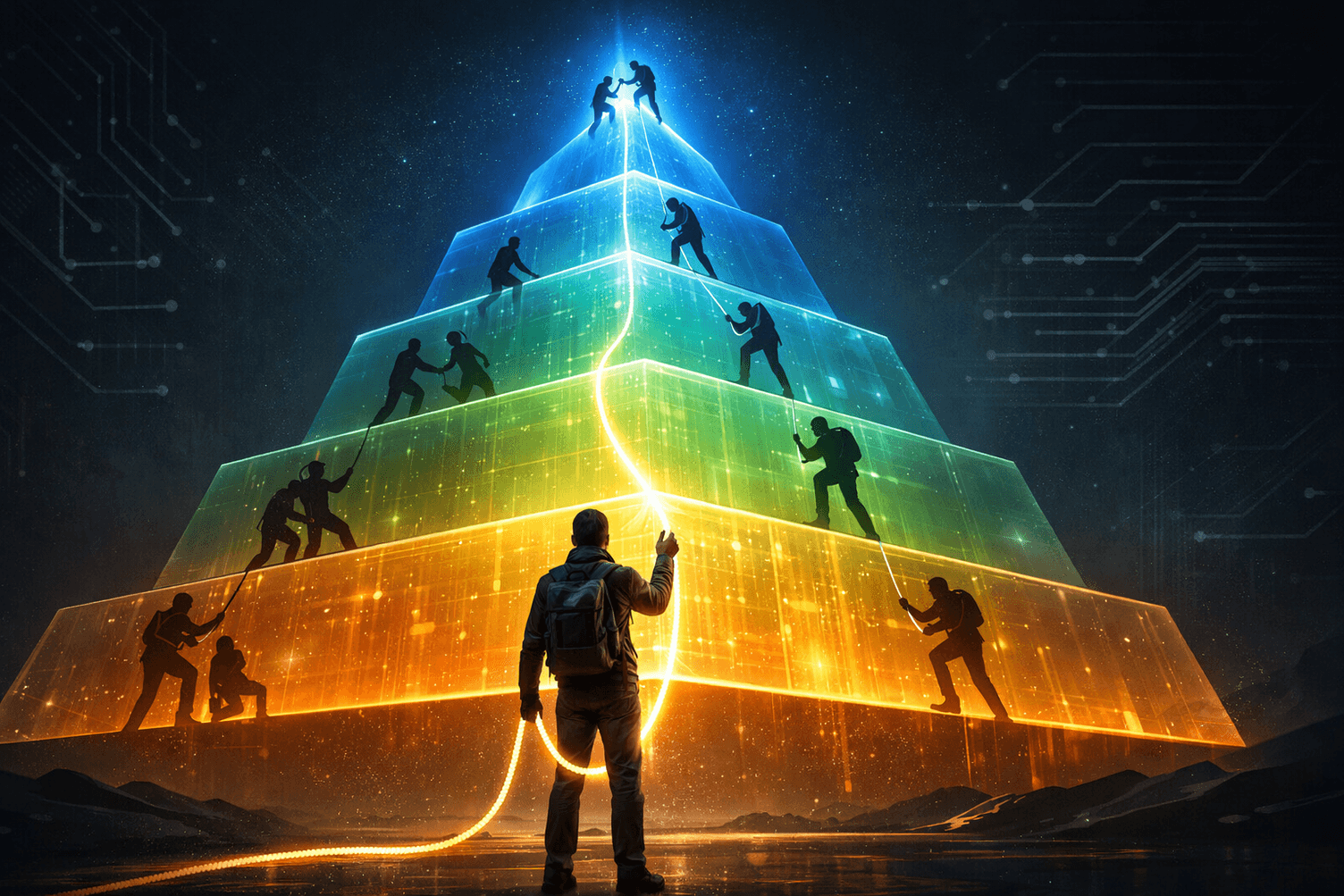

The AI Enablement Pyramid

AI enablement isn't one thing. It's a progression. Every organization is climbing it, every person on the team needs to be welcomed or dragged up it, and the AI Enablement Engineer is the one holding the rope.

Level 1: Access & Policy. Enterprise accounts with frontier model providers. API keys provisioned. Usage policies that don't block productivity. If your organization is still stuck here in 2026, it's time to move the fuck on. Most tech-forward companies crossed this threshold last year. It's table stakes.

Level 2: Context Engineering. This is where agents start becoming genuinely useful. CLAUDE.md and AGENTS.md in every repo. Eventually a context/ directory with architecture docs, coding conventions, domain knowledge. The shift from "AI that generates code" to "AI that understands our codebase and our decisions." Most teams that feel like they're getting real value from AI have reached this level.
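
A quick way to see how far a team is into Level 2 is to audit which repos still lack those context files. Here's a minimal sketch, assuming all your repos are checked out side by side under one directory; the function name and the directory layout are mine, for illustration only.

```python
from pathlib import Path

# The two context files named above; extend the tuple for your own conventions.
CONTEXT_FILES = ("CLAUDE.md", "AGENTS.md")


def missing_context_files(repos_root: Path) -> dict[str, list[str]]:
    """Map each repo directory name to the context files it lacks.

    Assumes every immediate subdirectory of `repos_root` is a repo checkout.
    Repos that already have every file are left out of the report.
    """
    report: dict[str, list[str]] = {}
    for repo in sorted(p for p in repos_root.iterdir() if p.is_dir()):
        missing = [name for name in CONTEXT_FILES if not (repo / name).is_file()]
        if missing:
            report[repo.name] = missing
    return report
```

Point it at something like `Path.home() / "code"` and the output is a to-do list for the week.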

Level 3: Workflow Integration. Code review automation. CI/CD hooks that trigger agents. The product development lifecycle (PDLC) starts shifting. You can feel the bottlenecks moving: code generation isn't the constraint anymore, it's review, testing, deployment, coordination. Rituals start changing. Standups sound different. Sprint planning accounts for agent throughput. This is where most AI-forward teams are right now, and where the AI Enablement Engineer's work starts becoming visibly high-impact.

Level 4: Tools at Scale. Going all out on MCP servers and skills. Connecting agents to internal APIs, databases, documentation, ticketing systems, Slack, Notion. Every tool the team uses becomes accessible to every agent. This is where the "let me hook you up" part of the job gets serious. The AI Enablement Engineer is now building infrastructure, not just configuring tools.

Level 5: Composition. Here's where it gets interesting. You realize that tools don't just add up, they multiply. An agent with access to your codebase AND your ticketing system AND your documentation can do things that none of those integrations could do alone. MCP and skill composition becomes the focus. You also hit a wall: context duplication, maintenance burden, configuration drift across repos and teams. Solving this is real engineering work.
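
Configuration drift is the easiest of those walls to measure. A rough sketch, assuming the same shared config file gets copied into each repo under one checkout directory: group repos by a hash of that file, and more than one group means the copies have diverged. The function name and the `.mcp.json` filename in the usage note are illustrative assumptions, not a prescribed layout.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def config_variants(repos_root: Path, filename: str) -> dict[str, list[str]]:
    """Group repos by the SHA-256 digest of their copy of `filename`.

    Repos without the file are skipped. One group means every copy is
    identical; several groups mean the copies have drifted apart.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for repo in sorted(p for p in repos_root.iterdir() if p.is_dir()):
        target = repo / filename
        if target.is_file():
            digest = hashlib.sha256(target.read_bytes()).hexdigest()
            groups[digest].append(repo.name)
    return dict(groups)
```

Calling `config_variants(root, ".mcp.json")` and checking `len(...) > 1` is enough for a weekly drift report. Deciding which variant should win is still a human call.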

Level 6: Memory & Proactivity. Agents that remember across sessions. Agents that run on schedules, not just when someone prompts them. Proactive work: a code review bot that flags issues before anyone asks, a documentation agent that keeps docs in sync with code changes, a triage agent that categorizes incoming issues overnight. The shift from "AI responds to me" to "AI works alongside me."

Level 7: Special Purpose Agents. The apex. Context, skills, tools, goals, memory, and workflows assembled into purpose-built agents for entire functions. Not a generic coding assistant with some MCP servers attached, but a legal expert that understands your contracts, your redline history, your guidelines, and your risk tolerance. A data assistant that knows your schema, your metrics definitions, your dashboards, and who typically asks what. A product agent that lives in your ticketing system and understands your roadmap, your customers, and your engineering constraints. Each one is a coherent unit: the right tools, the right context, the right memory, the right workflows, working together.

Most organizations are somewhere in levels 1 through 3. The AI Enablement Engineer's job is to keep pushing everyone up. If the upper levels feel slightly foreign to you right now, good. That's the territory where the biggest multipliers live, and it's closer than you think.

What the top of the pyramid looks like at Preset

Levels 5 through 7 are where it stops being abstract.

At Preset, we've built several special-purpose agents that serve entire functions across the company. Not chatbots. Not RAG pipelines. Not thousand-line system prompts. Each one is a composition: the right MCP servers, the right skills, the right API keys, the right documentation, the right memory, all assembled into a coherent unit that genuinely supports real work.

DatAgor is our data assistant. It has access to our data warehouse, our metrics definitions, our dashboards, our schema documentation, and our dataeng repo where all our dbt models and Airflow DAGs live. It can infer lineage, understand the pipelines end-to-end, run SQL through Superset's MCP server, and pull in data warehouse documentation on the fly. Anyone in the company can ask it questions about our data, get queries written, explore dashboards. But it goes further: when it finds a data quality issue or a broken pipeline, it can spawn agents directly on the dataeng repo to fix or improve things. The data team didn't scale headcount. They scaled access. A product manager who used to wait for a data analyst to pull numbers can now get answers in seconds.

Saul ("Better Call Saul") is our legal expert. Connected to our contracts, redline history, legal guidelines, compliance docs. Instead of waiting three days for a legal review of standard terms, people just ask Saul. It knows our risk tolerance, our standard positions, our previous negotiations. It doesn't replace the legal team. It makes the legal team's expertise accessible at the speed the business needs.

Architect is our cross-repo intelligence layer. It maintains a service and repo registry, architecture diagrams, network topology, cross-repo workflows, and deployment schemes — all the "meta" context that can't live in any single repo. Ask it "how does observability work at Preset?" or "where exactly is the system prompt for the Preset Chatbot?" and it uses the GitHub search API to hunt across repos, stitch together the answer, and explain how the pieces connect. It's great for questions that span authentication flows, deployment pipelines, or any workflow that touches multiple services. The kind of context that used to live only in senior engineers' heads now lives in an agent that anyone can query.

PM is our product assistant, wired into our ticketing system. It does triage, answers product questions, surfaces context that used to require interrupting three people. It understands our roadmap, our customer segments, our engineering constraints.

Harbor is our newest agent, still in its training and learning phase. It helps with release management and customer rollouts: access to ticketing systems, GitHub, release crafting workflows. It nags on must-fix issues, helps cherry-pick changes, assembles release branches, and assists with deployment coordination. Not fully autonomous yet, but already useful enough that the release process is less of a bottleneck.

GitHub Handler is our org-level GitHub bot. It impersonates whoever calls it, scopes sessions to the PR or issue being discussed, and maintains continuity across conversations happening in GitHub. It learns, remembers, has access to MCP servers, can consult specialist agents like Architect, and coordinates other agents doing work in proper worktrees — all observable and post-promptable on its Agor board. Compared to ephemeral agents like Copilot or @claude that start fresh every time, this one carries context, has tools, and can spin up live shared environments for review.

Every single one of these is accessible from Slack or GitHub. That's the accessibility piece that makes adoption real. You don't need to install anything, configure anything, or even know what Agor is. You just ask a question in a Slack channel. The gateway to AI is wherever your team already works.

None of these agents would exist without a partnership between the AI Enablement Engineer and the subject matter experts who own each domain. The legal team knows their risk tolerance and negotiation patterns. The data team knows which metrics matter and why. The product team knows the roadmap and the customer segments. The AI Enablement Engineer brings the technical ability to compose the right MCP servers, the right document access, the right memory system, but the domain expertise that makes each agent genuinely useful comes from the people who live that function every day. The best agents are co-created, not handed down.

That's the AI Enablement Engineer's work: partnering across the company to turn institutional knowledge into something an agent can act on. Who else is thinking about this?

Blowing through the ceilings

This might be the highest-impact role in tech right now.

A great individual engineer using AI might be 5-10x more productive. That's impressive. But that's one person. The AI Enablement Engineer's job isn't to be 10x themselves. It's to make 50 people 3x. The math is obvious: 50 × 3x beats 1 × 10x, every single time.
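
To put numbers on it, here's a back-of-the-envelope sketch. It assumes output adds up linearly in "baseline engineer" units, which is a simplification, and it compares a 50-person team with one 10x standout against the same team with everyone at 3x.

```python
def team_output(headcount: int, multiplier: float) -> float:
    """Output in baseline-engineer units if `headcount` people run at `multiplier`."""
    return headcount * multiplier


TEAM_SIZE = 50  # team size used in the text

# Scenario A: one standout at 10x, the other 49 unchanged at 1x.
lone_hero = team_output(1, 10) + team_output(TEAM_SIZE - 1, 1)

# Scenario B: everyone lifted to 3x.
enabled_team = team_output(TEAM_SIZE, 3)

print(lone_hero, enabled_team)  # 59 150
```

Even after giving the lone hero credit for the 49 unchanged colleagues around them, the enabled team comes out about 2.5x ahead under these assumptions.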

One afternoon of setup (configuring an assistant, connecting it to the right data, deploying it to a Slack channel) creates a permanent productivity gain for an entire team. That's the kind of impact that used to require shipping a product. Now it requires one person who understands both the AI capabilities and the organization's needs.

If DevOps was about making infrastructure self-service, AI Enablement is about making intelligence self-service.

That said, the ceilings are real. Two in particular.

Agency and access. Every useful agent needs access to something: a database, an API, a ticketing system, a production cluster. The more access you give, the more useful the agent becomes — and the more damage it can do. An agent with write access to your HubSpot instance, your deployment pipeline, or your data warehouse is one bad prompt away from a very expensive mistake. The AI Enablement Engineer lives in this tension every day: "give agents access to everything they need" vs. "don't let them blow up production." The answer isn't to lock everything down. It's to build trust incrementally — start with read-only access, scope permissions tightly, expand as the agent proves itself. The same way you'd onboard a new team member, except the ramp is faster and the guardrails need to be more explicit.
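
Here's one way to make "build trust incrementally" concrete. It's a minimal sketch, not how any particular tool implements it: every name below is hypothetical. Access is denied by default, agents start at read-only, and each step up needs a named human approver.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Access(IntEnum):
    NONE = 0
    READ = 1
    WRITE = 2


@dataclass
class AgentGrants:
    """Per-agent permissions; any resource not listed is denied."""
    grants: dict[str, Access] = field(default_factory=dict)

    def allows(self, resource: str, needed: Access) -> bool:
        return self.grants.get(resource, Access.NONE) >= needed

    def promote(self, resource: str, approved_by: str) -> None:
        """Raise access by one step, capped at WRITE; requires a named approver."""
        if not approved_by:
            raise ValueError("promotion needs an approver")
        current = self.grants.get(resource, Access.NONE)
        self.grants[resource] = Access(min(current + 1, Access.WRITE))


# A data agent starts read-only on the warehouse and has no CRM access at all.
data_agent = AgentGrants({"warehouse": Access.READ})
print(data_agent.allows("warehouse", Access.WRITE))  # False
data_agent.promote("warehouse", approved_by="data-team-lead")
print(data_agent.allows("warehouse", Access.WRITE))  # True
print(data_agent.allows("crm", Access.READ))         # False
```

The one-step-at-a-time promotion is deliberate: it forces a checkpoint between "can look" and "can change", which is where most of the expensive mistakes live.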

Token chain reactions. Once agents can spawn other agents, costs and scope can spiral fast. A proactive agent decides to "fix" something overnight and kicks off a cascade of sub-agents, each burning tokens, each making changes nobody asked for. We've all seen the OpenClaw horror stories: an agent that decided to vibe-code a replacement for an entire internal tool while everyone was asleep. The solutions are emerging: token quotas per time window, spending limits, observability dashboards that show exactly what's running and what it's costing. Most providers now offer some form of rate limiting, and tools for tracking agent spend are getting better. But the AI Enablement Engineer has to be deliberate about setting these guardrails before turning agents loose, not after the first runaway bill.
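
A token quota per time window is simple enough to sketch. This is an illustrative in-process version, assuming you can intercept each agent call and know its token count before it runs; the class and method names are mine, not any provider's API.

```python
import time
from collections import deque
from typing import Callable


class TokenBudget:
    """Sliding-window quota: at most `limit` tokens per `window_s` seconds."""

    def __init__(self, limit: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window_s = window_s
        self.clock = clock  # injectable so the budget can be tested without sleeping
        self._spent: deque[tuple[float, int]] = deque()  # (timestamp, tokens)

    def _used(self) -> int:
        """Drop spends that have aged out of the window, then total the rest."""
        cutoff = self.clock() - self.window_s
        while self._spent and self._spent[0][0] <= cutoff:
            self._spent.popleft()
        return sum(tokens for _, tokens in self._spent)

    def try_spend(self, tokens: int) -> bool:
        """Record the spend and return True, or return False if it would exceed the quota."""
        if self._used() + tokens > self.limit:
            return False
        self._spent.append((self.clock(), tokens))
        return True
```

Give each parent agent one budget and make every sub-agent it spawns draw from the same object. A cascade then stalls at the quota instead of running until morning.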

The AI Enablement Engineer has to navigate both of these, not by avoiding risk, but by building the right scaffolding around it. The ultimate goal isn't to hoard the capability. It's to enable enablers: get every team obsessing about assembling the right combination of tools, context, and skills to change how their entire function works. Make multiplication contagious.

Why this role emerged now

Three things converged in 2025-2026 that made this role suddenly critical:

AI agents became genuinely capable. Claude Code, Codex, Gemini: these aren't autocomplete anymore. They write features, review PRs, debug production issues, draft legal analyses. The gap between "AI as a toy" and "AI as a teammate" closed fast.

The configuration layer exploded. CLAUDE.md, AGENTS.md, MCP servers, custom instructions, system prompts, context engineering: getting real value from AI agents requires meaningful setup. That setup is different for every team, every repo, every use case. Someone has to figure it out.

The gap between AI-native and AI-absent widened. Inside the same company, some teams are shipping 5x faster with AI while others haven't changed their workflow at all. Leadership noticed. "Why is Team A shipping so fast?" Almost always, the answer is: they have someone who figured this out and set it up for everyone.

That someone is the AI Enablement Engineer.

The toolkit problem

The AI Enablement Engineer's job is to enable others at scale, but their toolkit is designed for individual use.

Today, enabling someone means SSHing into their machine to fix a config file. It means pasting MCP server configurations in Slack DMs. It means saying "here, copy this CLAUDE.md into your repo." It means building an assistant that works great on your laptop and then struggling to make it available to anyone else.

You can't scale AI enablement through config files and screen shares.

What this role actually needs:

- "Let me hook you up." An API key, default configs, a working agent setup, provisioned for someone in minutes, not hours.

- "Let me see what's happening." Visibility into what agents are running across the team, what they're working on, where they're stuck.

- "Let me help you out of that corner." The ability to see someone's agent session and prompt them out of a dead end, without taking over their machine.

- "Let's make this available to everyone." Turn an individual assistant into a shared resource. Make that vibe-coded app accessible to the team. Promote what works.

- "Let's coordinate." Orchestrate multiple agents on complex work across branches, repos, and people.

This is what happened to me at Preset. Not by design, but by following the trail of highest impact.

It started informally. "Man, I gotta get my whole engineering team on Claude Code yesterday." That was last year. So I did it. Set up configs, wrote the CLAUDE.md files, showed people the workflows. Then the questions started coming: "How do I get it to understand our codebase?" "Can you help me set up MCP for our internal APIs?" "I built this thing but it only works on my machine."

Each week, I'd look at what would have the highest impact for the team that week, and it kept being some version of AI enablement. One week it was building a data assistant so the whole company could query our data through Slack. The next it was wiring up a legal expert so contract reviews didn't bottleneck deals. Then it was figuring out how agents should orchestrate other agents for complex multi-repo work. Then realizing the bottleneck wasn't code generation, it was code review and collaboration between humans and agents.

Every week, following the highest-impact trail. At some point, the progressive realization: this IS the job. This is the highest-impact thing I can do. Not writing code myself. Not even building agents for myself. Enabling everyone else to multiply.

But I kept hitting the same wall: the tooling for doing this at scale didn't exist. Everything was trapped in terminals, config files, individual laptops. I couldn't see what my team's agents were doing. I couldn't provision setups for others without touching their machines. I couldn't turn what worked for one person into something the whole team could use.

So we built it.

Agor: the control plane for AI Enablement

Agor is a multiplayer canvas for orchestrating AI agents. It elevates the AI configuration layer from individual laptops to a shared platform, a control plane where AI Enablement Engineers can actually do their job at scale.

On Agor's spatial canvas, you can see every agent running across your team in real-time. You can set up environments and configurations for others. You can coordinate multiple agents on complex work. You can turn individual assistants into shared resources.

Making AI collaborative at the team level isn't just about visibility though. It also means thinking carefully about boundaries. Most AI sessions benefit from being live and shared: your team should see what's being built, learn from each other's prompts, jump in when an agent needs help. But not everything should be visible to everyone. Agents working with sensitive data, HR workflows, security audits, contract negotiations: these need isolation. The real world isn't "everything shared" or "everything siloed." It's a spectrum, and the AI Enablement Engineer needs to manage that spectrum.

It's what I needed when I started doing AI enablement at Preset, and it's what every AI Enablement Engineer will need as this role becomes formalized across the industry.

We've open-sourced it, like Airflow and Superset before it, because we believe this kind of infrastructure belongs to the community that uses it.

The call to action

If you recognized yourself in this post as the person at your company who's been doing this work without a title, you're an AI Enablement Engineer. The role is real. The impact is measurable. The ceiling is nowhere in sight.

The function is emerging right now, at every company that's serious about AI. Some will formalize it early and pull ahead. Others will wonder why their AI adoption stalled.

We're building the toolkit. Come help define the role.

- Agor on GitHub — star it, try it, contribute

- Join the Agor Discord — the community is small and early. That means you'll actually shape it.

- Agor Demo: Orchestrating 6 agents on Apache Superset — see what AI enablement looks like in practice

Maxime Beauchemin is the creator of Apache Airflow and Apache Superset, and CEO of Preset. He's currently building Agor, the multiplayer canvas for orchestrating AI agents.